Sansar, the platform for live virtual events originally created by Linden Lab, had something of a scare in late December 2021, when all services, including the platform’s website, went off-line for a period of time. This prompted a round of speculation / rumour (and in some cases, indignation) that the platform had been shut down without warning.

As we all know, Sansar went through an intensive development cycle from 2014 through 2017, prior to being (prematurely, I tend to believe) launched on the world at large. It then – being brutally honest – went through a period in which Linden Lab flip-flopped in direction and purpose for Sansar as the bubble of hype surrounding consumer-based VR (unsurprisingly) burst. Finally, in March 2020, after having laid-off the majority of staff involved in Sansar at the end of 2019, Linden Lab sold Sansar to what appeared to be a start-up company, then called Wookey Projects (eventually to become Wookey Search Technologies Corporation, operating as Wookey Technologies). For a time, this seemed to bode well for Sansar’s future: the majority of laid-off staff were re-hired, development work resumed, etc., and Sansar even hosted some large(ish) events in 2020, such as Lost Horizon’s Glastonbury Shangri-La. But really, Sansar continued to struggle to build a genuine audience.

Then, on Wednesday, December 22nd, Sansar’s presence on the web abruptly vanished, with the website returning a 404 error, and the client left unable to connect to any servers. As the hours passed, speculation grew that perhaps the plug had been pulled, particularly in light of claims doing the rounds that all Sansar technical staff had been placed on extended furlough since some point in September 2021 (which, given the company’s total number of direct employees is put at just 22 by RocketReach and others, could represent a significant portion of staff).

Having heard the news about the outage, I decided to wait things out for at least 24 hours to see if the situation changed, or if any announcement were to be made. As it turned out, things did start to change in that time, as Sansar’s services began to come back on-line on December 24th, starting with the sansar.com website, with the avatar service being about the last visible service to re-surface before log-ins resumed.

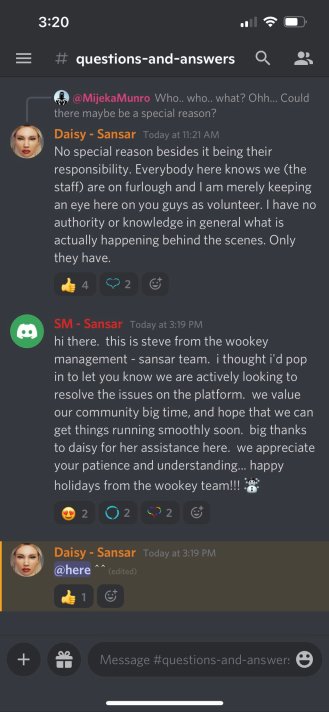

Exactly what went wrong is unclear – being completely transparent, I’ve not actually been actively involved in Sansar since the end of June 2020 – but so far as I’m aware, nothing official has been stated beyond a Discord channel statement signed “Steve from the Wookey management – Sansar team” (displayed on the right), posted by Steve Moriya, VP of Business Development (with thanks to Dave for the pointer). Given we are in the end-of-year break, perhaps something will be stated in the New Year, but time will tell on that.

However, the outage did prompt me to return to information that came my way in September 2021, and which I (again) opted to withhold from blogging about at the time, in order to see how developments progressed.

Coming by way of Cain Maven, a Second Life creator and an individual not given to hyperbole or rumour, that information was / is that Wookey Search Technologies Corporation is engaged in a long-running dispute with its former CEO, Mr. John Fried, who has been seeking recompense from the company that could amount to more than US $1 million.

Companies being sued by former employees isn’t exactly unheard of in the United States (Linden Lab itself has faced it – or the threat thereof – at least once), and such disputes can be settled without ever reaching a formal trial. Hence, when Cain pointed me to the matter, I decided to hold off on commenting and await developments.

However, case number CGC20584302¹, filed with the Superior Court of California, San Francisco, now appears to be on course for a potential trial by jury, following demands for one filed with the court by both parties at the end of November 2021.

The situation has been developing since shortly after Wookey acquired Sansar in 2020. At its heart is a claim by Mr. Fried that Wookey failed to honour commitments it allegedly made to him concerning salary and reimbursement of relocation fees (from Florida to California), and further failed to honour an alleged US $1 million bonus payment, for which he is also seeking recompense. It’s a case that has seen both sides file claim and counter-claim, motion and counter-motion; but as noted above, both parties filed demands to move to a trial by jury in late November 2021. As a result, the court issued a Notice of Time and Place of Trial at the start of December 2021, and unless anything else arises, the trial will commence on June 27th, 2022, and will be heard in the court of the Honourable Suzanne Ramos Bolanos.

That said, a settlement may still be reached: a conference to explore whether this is possible remains scheduled for March 16th, 2022, having been postponed from its original date of December 8th, 2021. In the meantime, both parties are moving to the Discovery phase of the case, which may also bring requests for continuance before the judge, further delaying the trial or altering the direction of the case.

I offer no direct conclusions on the matter here – I’m not a lawyer, after all. However, given Wookey Search Technologies appears to have been valued at around US $3.78 million as of September 2021, were the case to go all the way and judgement be made in favour of Mr. Fried, it could put a significant dent in the company’s finances. As such, I’ll continue to track the case and look to provide updates in 2022.

¹ Note that the court’s website requires multiple responses to a Captcha system, and can time out relatively quickly, requiring additional Captcha confirmations.