On Monday, June 26th, Linden Lab issued the Inventory Extensions project viewer, offering two new inventory features intended to make it a lot easier to browse inventory and inventory folders, and to identify what items are and what folders contain. An official blog post accompanied the new viewer, and this article is intended to put a little meat on the bones of that post for the curious.

The new capabilities comprise:

- Inventory Previews (previously referred to as Inventory Thumbnails):

  - The ability to take images of individual items within inventory (clothing, body parts, accessories, attachments).

  - Having these images persistently linked to the item (unless intentionally deleted or changed) and displayed when the mouse pointer is hovered over the item in question.

  - The ability to use your own images, either from inventory or uploaded through the preview tool, which can be persistently associated with the item and displayed when the mouse pointer hovers over it.

  - The ability to create and include images for other inventory asset types, such as Calling Cards (e.g. a photo of the person to whom the card relates), EEP Settings, Landmarks (e.g. a photo of a location), Notecards, Gestures, Scripts, etc.

  - The ability to associate images with an entire folder (e.g. a photo of a complete outfit contained in a folder).

  - There is no fee for creating preview images, whether they are taken using the in-viewer tool or uploaded from your own images via the tool (note that the usual fee will still apply if you use the Build → Upload Image option).

  - Merchants and creators can add previews to their delivery folders and items, and these will be displayed automatically when the mouse hovers over the item or folder.

  - As far as I’m aware, these previews should not place any overhead on inventory loading.

- Single Folder View: the ability to see the contents of a single inventory folder in its own window.

Please note: at the time of writing, these features are only available in a project viewer obtainable via the Alternate Viewers page. They should therefore be regarded as being for testing purposes, and may be subject to further iterations and changes before they reach de facto release status. Those trying the viewer are encouraged to report any bugs they find via the Second Life Jira.

Inventory Previews

Creating a Preview via the Preview Snapshot

- Select (and wear / display, as applicable) the item for which you wish to create a preview image. In this example, I’m using a hairstyle.

- If you are creating a preview of an item of clothing or other wearable, you might want to pose your avatar (although this obviously isn’t essential).

- Position your camera so you are ready to take your preview image.

- Open your Inventory, locate the item for which you wish to create a preview image, and right-click on its name to display the Context Menu. Select Image… from the menu.

- This will open the Change Item Image floater. To take a preview image directly, click on the Use Snapshot Tool button on the floater (second button from left, with a camera icon).

- Clicking the Use Snapshot Tool button opens the Item Snapshot floater:

- Use the Take Photo button to refresh the preview of the image about to be taken, if required.

- When you are satisfied with the preview image, click Save.

- The preview image will be generated and automatically associated with the inventory item.

Viewing a Preview Image

- In Inventory, hover the mouse over an item.

- If there is a preview image set for it, it will be displayed whilst the mouse remains over the item.

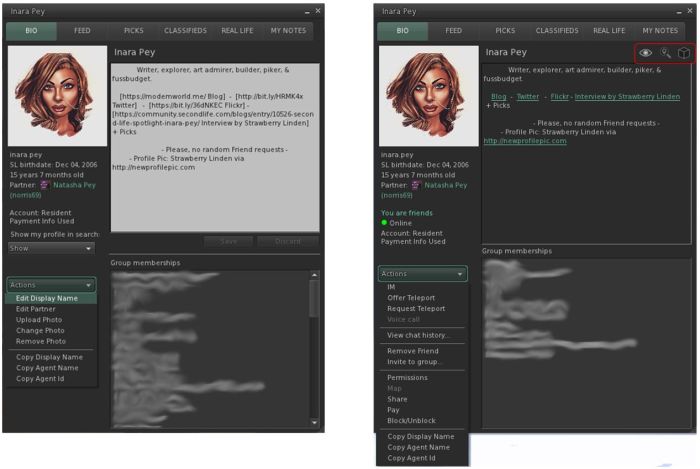

Buttons on the Change Item Image and Item Snapshot Floaters

The image below provides additional descriptions for those buttons on the Change Item Image and Item Snapshot floaters that might not be obvious at first glance.

Additional Notes

- When using the Upload From Computer or Use Texture options in the Change Item Image floater (the latter allowing you to select, e.g., a snapshot saved to inventory or any other texture in your inventory), there is no need to take an additional snapshot using the floater: once the image is displayed in the floater, it will be associated with the item.

- To create an image for an entire folder in inventory (such as an outfit), follow the steps for Creating a Preview via the Preview Snapshot, but right-click on the folder itself to select Image… from the Context Menu.

Single Folder View

This option allows you to see the contents of a single inventory folder in its own window.

- Locate the required folder in inventory.

- Right-click on it to display the Context Menu and select Open in a New Window.

- The contents of the folder are displayed in a new floater:

- Items with associated preview images have those images displayed in the upper part of the floater.

- Items without any associated preview image are listed in the lower portion of the floater.

General Notes

There have been some claims that the Inventory Preview is an attempt by LL to move in on popular products such as CTS Wardrobe; however, these claims are not really accurate. This kind of inventory preview has long been requested for the viewer, whereas CTS and similar systems are geared towards web-based inventory organisation (and may require the use of RLV), and offer a more rounded feature set in terms of tagging and other capabilities. As such, they are in no way invalidated by the release of this functionality, and some users may find that such systems remain the more attractive option.

That said, the Lab’s inventory preview is a useful feature, and one that will hopefully be used by content creators as well as by users in general. The single folder inventory view is also useful, although I have a minor niggle over consistency of naming (if the capability is called Single Folder View, how about calling it that in the Context Menu?).

As noted above, both features are still in development, are currently only available through the Alternate Viewers page, and may be subject to change. Depending on what changes, if any, are made, I may revisit this viewer and provide a further piece on it once it reaches de facto release status.