It’s been a while since I poked my head into Sansar; I’ve been watching the events page to see what is going on and the COMETS – users engaged on the platform – have been doing a sterling job in trying to provide a nucleus of a community and running events among themselves. Product Meetings have been few – two since Wookey took over things, one of which I could attend, and one since then; hopefully more will come along as the Wookey Team settle in to remote working – something that was still being set up at the meeting I did make.

However, a comment made at the meeting I did attend has come to pass: as of June, Monstercat, the Canadian electronic dance music (EDM) label, is back to running events in Sansar, with their Call of the Wild events now scheduled on a weekly basis. It’ll be interesting to see how these pick up again; prior to the hiatus, they were (on a, I believe, monthly basis) generating around 1,300-1,500 “interested” responses.

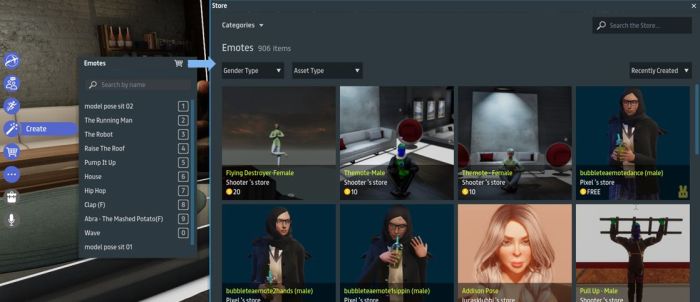

May and June have seen a couple of platform updates – release notes here and here. Both offer continuing support for events, with the most recent also featuring a revamped Nexus and updates to the user on-boarding process. Other updates among the recent pair of releases include new events-oriented templates creators can use for their own events, the ability for world owners to mute all voice users in their environments, additional shaders and bug fixes, but I’ll be focusing here on the Nexus and on-boarding.

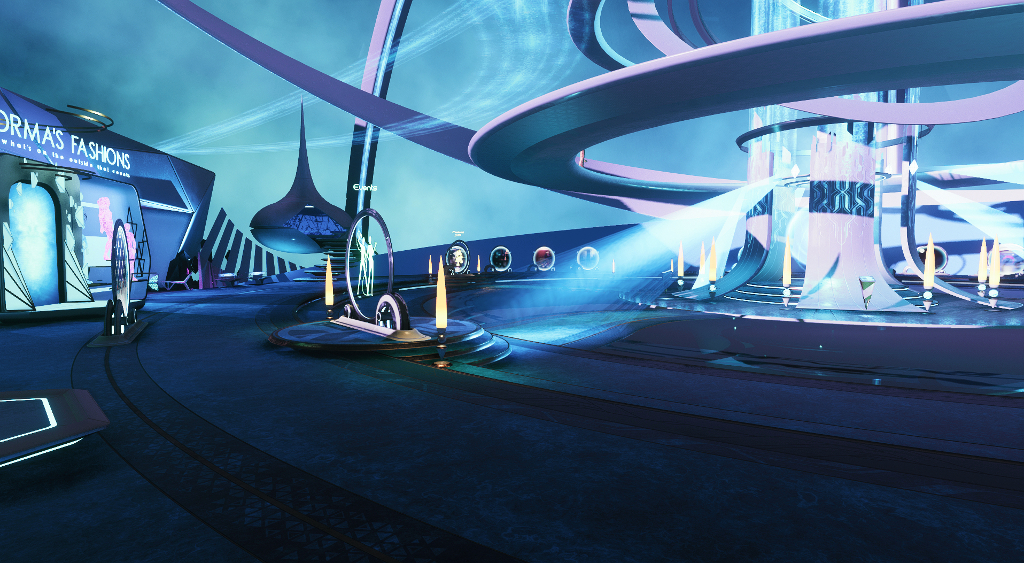

The Nexus Reloaded

The Nexus has received a significant overhaul whilst also retaining much of its look and feel. Major changes lie in the removal of Agent Prime and any hint of Sansar Quests (although Agent Forma remains in Club Forma; she’ll just politely tell you to bugger off when touched).

The Prime Portal central area remains, again with a new look, while the portal itself is gone, as is the lounge area above it. Replacing the Prime Portal is a new event-focused portal that leads to the currently-promoted major event or, if the event is not currently live, provides the option for people to register their interest in attending and to have it added to their calendar. At the time of my visit, this was promoting the Lost Horizon event Sansar is hosting with Glastonbury Shangri-La (read more about this in Glastonbury comes to Sansar for 2020).

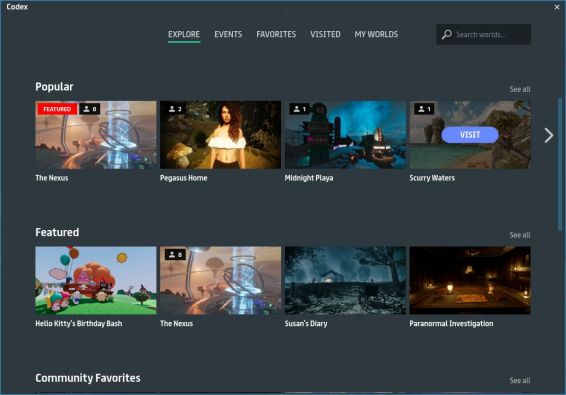

Searchlights swing back and forth from the central portal area to illuminate the four major portals around the outer walkway. These remain pretty much as before: Monstercat, Sanrio World (Hello Kitty) and a link to open the Popular Places section of the Codex (if you’re a Second Life user, Codex = Destination Guide with added functionality), with the fourth offering a teaser for Bootshaus (which might be some kind of virtual tie-in with Bootshaus.tv?).

To further encourage general exploration, the Nexus now features an outer “portal wall”. This features a ring of portals leading to the more popular user-created Sansar Experiences. I’m unclear as to how worlds are selected for these portals – I assume there is a process in place – or how / when the destinations to which they point might be refreshed.

On-Boarding Process

The revamped on-boarding process is still a 4-step affair: download the client, create an account and verify e-mail, select an avatar, get dropped into the Nexus. With a notable exception, the majority of the changes in the latest release appear to be cosmetic: the installer, updater and log-in screen all now share the same graphic that appears to have been largely inspired by the cinematic version of Ready Player One.

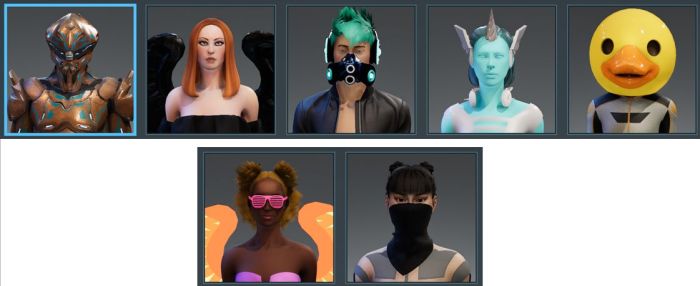

That exception is the avatar carousel, which sees a new set of seven avatars for new users. Unsurprisingly, the focus is on human avatars with three male and three female in casual / clubbing garb. They are rounded-out by one non-human (but humanoid) robot. I’ve no idea if these are purely Sansar Studios created avatars or if some have been selected from the work of creators (as has been the case in the past).

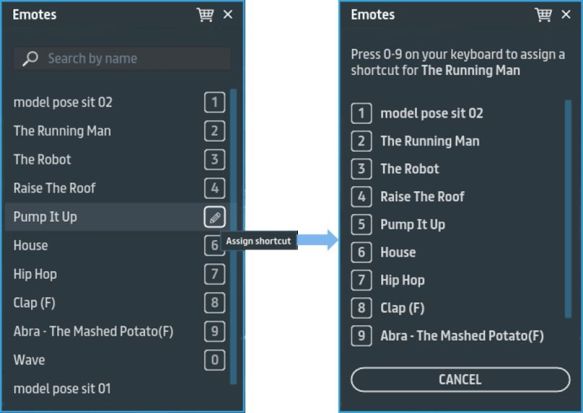

Additionally, it would appear that the “getting started” pop-ups have been overhauled to look a little brighter. These are again minimal; all I’ve encountered is how to move and how to emote (play animations) – which is fine for getting around and bopping on a dance floor – but what about things like text chat for those who don’t have a microphone (the latter is at least intuitive, having its own UI button)?

And how about at least giving new users a clear hint that they have a home space? If nothing else, a pointer to the latter might pique the curiosity of some coming into Sansar for an event and get them to come back and have a look at what it is, and so help kick-start them into wider exploration.

New User Experience – Digital University

While not an official undertaking by the Sansar team, when it comes to user on-boarding, the Sansar Training Grounds 101, created by experienced Sansar User ZeroCheese for the Digital University, does offer a more rounded opportunity for new users to more fully get to grips with the client.

Available through the Codex and (currently) featured at the Nexus, this experience offers a guided walk-through of core aspects of using Sansar – movement, manipulating objects, sitting, using emotes (animations) – which includes an introduction to the idea of using the Sansar Store, using voice and text chat, and using the UI. True, not absolutely everything is shown – but frankly, it doesn’t need to be; what is there is sufficient to allow a new user to get on in Sansar, with the rest open to being learned / discovered in time.

This latter aspect of gaining familiarity with a platform over time is something that often gets overlooked in discussions about the “new user experience”. All too often it seems people get too caught-up in believing everything must be laid before the new user – regardless of the fact that it likely took them weeks or months to understand all that they now want to push onto the new user in a single serving. That it might actually make things harder for said user, and add to the belief that a platform has a “steep” initial learning curve, is often overlooked.

As it is, Training Grounds does a pretty good job of covering the vital basics whilst also pointing the way for the user to poke at the client UI and discover / learn about it on their own. One or two aspects could perhaps be a little better: the initial walk training seems excessive, whilst the need to land fruit in the basket in order for the next lesson to be reached could be better emphasised. However, what is provided is more than enough to allow a novice user to more fully enjoy Sansar. If only it were more front-and-centre at the Nexus to encourage interested new users to give it a go.

General Observations

The new look Nexus does what it says on the tin – and by offering more in the way of portals to experiences, it appears to have overcome something of the past critique that it was too much of a bottleneck that stopped incoming users filtering through to other locations on the platform. Certainly, during all of my most recent visits over the last few days, the number of avatars within the Nexus have been nowhere near as lose as they were in the back-end of 2019.

The new avatars are, I would suspect, bland when it comes to those familiar with platforms like Second Life. However, they do fulfil the need to offer events-oriented avatars to incoming attendees, and this should be borne in mind. Hopefully, more will be added to the mix; seven is a very small number, and were an event like Lost Horizon to generate a lot of interest and engagement from newcomers, then leaving the choice of avatar to just those seven could lead to such an event looking something like a clone fest.

Overall use of Sansar currently appears to remain somewhat below pre-sale levels, with most activity being driven by the COMETS, as noted above. It’ll be interesting to see how much impact on peak levels of activity the renewed Monstercat events have, and what the upcoming Lost Horizon event does for Sansar’s visibility.