On Thursday, April 9th, Sansar held its first product meeting since being acquired by Wookey Project Corp (see: Sansar: looking at the apparent new owner – Wookey Projects Inc. and Linden Lab confirm the sale of Sansar to Wookey Project Corp – updated). The meeting was really a means for the team to say “hi! We’re here!”, rather than providing a huge depth of hardcore information, although there were some titbits of news.

The following is a summary of the key points.

On Wookey and Sansar

No-one from the Wookey management team was present at the meeting, in part because they have yet to have avatars made; however, their presence is expected at times in future meetings, when they’ll be able to talk more about Wookey Project Corp and their goals / view of Sansar.

A shout-out from the Sansar team was given to Sheri Bryant. Sheri (aka Cowboy Ninja in Sansar) was the General Manager for Sansar at Linden Lab, and she is credited with leading the work in bringing about the deal between LL and Wookey Project Corp. She has also moved to Wookey, where she remains in charge of Sansar.

Many of the team are already with Wookey. Attending the meeting were familiar names: Binah (UI Engineer); Aleks (Product Manager); Galileo, Lacie, and Torley (Production Director, Sansar Studios). While he wasn’t at the meeting, Boden was also mentioned as having joined Wookey, retaining his position as a Product Manager.

Also joining the meeting were some new (to me at least) names: Cynno (Art Production Manager, Sansar Studios) and Sansar Studios (Colin – the Creative Director at Sansar Studios), both of whom report to Torley; together with Colo, the Director of Engineering and the Release Manager, and Steel, one of the QA Engineers.

Recruitment is still under way for many positions, including a number of engineering roles, and these vacancies and the SARS-CoV-2 situation are slowing the full resumption of work on Sansar. On the latter point, the Sansar team was less distributed than the SL team while at Linden Lab, with most of them office-based; this means equipment, etc., has been / is being sourced and shipped to their home locations to allow them to start remote working.

The meeting was also an opportunity to say farewell to Galileo as the Sansar Community Manager, who is departing the Sansar team as a result of having accepted a new position with Pocket Gems, a games development company. This means Lacie will be taking over as the official Sansar Community Manager, although Galileo will continue to be an active Sansar user and involved in the COMETS programme.

Roadmap

As has been indicated through various sources (see the Lab’s press release and the Sansar blog post both announcing Wookey’s acquisition of Sansar), the emphasis remains on building Sansar as an events platform that will attract “thousands”.

There is no public road map as yet, although it is promised that one will be produced – probably not as granular as the internal road map – and it might be available in two weeks’ time at the next Product Meeting. However, current areas of focus comprise:

- Continuing on from where things were left off.

- Moderation tools development and deployment.

- Narrowing the new user on-boarding experience – making it easier for people to get from sign-up to event; improving the tutorials, etc.

- Working on stability improvements for events.

- Tip jars are seen as being on the “tail end” of the moderation / on-boarding work.

However, it will take a little while longer for work to ramp-up once more due to both the current SARS-CoV-2 situation and the need to recruit additional personnel.

Avatars, Vehicles, Edit Tools Improvements, etc

Due to the current state of play, a lot of the planned / promised engineering / dev work has already been pushed back further in the road map. Specifically mentioned in this regard were:

- Avatar improvements.

- Vehicles.

- Edit Mode improvements, such as folders for items, etc.

- Allowing world creators to nominate “admin staff” to help run their worlds.

- Creator access to the Backpack.

- Collaborative building.

Sansar Mobile Service

Back when Sansar had yet to début, there had been talk of the platform being accessible from mobile devices. Ultimately, this got pushed to one side – but is now something of a priority.

Not much can be announced at this point in time other than:

- It will most likely be a streaming service, initially for iOS and Android.

- It will not (initially at least) support VR headsets like Oculus Quest.

- Precise initial capabilities are still TBD.

- Again, no time frame on when any first cut might appear, nor have potential fees been set.

Event Partners

- Monstercat, Roddenberry Entertainment and Fnatic will be returning to Sansar to host events.

- There have been continuing talks with musicians and “major names” about coming to Sansar to perform. None of the specifics are ready to be announced as yet, but some may be ready by the time of the next Product Meeting.

General Notes from the Meeting

- Support tickets filed before March 24th, 2020 need to be resubmitted, if still relevant.

- The partnership with Marvelous Designer™ should continue as before.

- Two-factor authentication for Sansar is “on the list” of things the team would like to implement. However, there is no time frame on when it might start to surface.

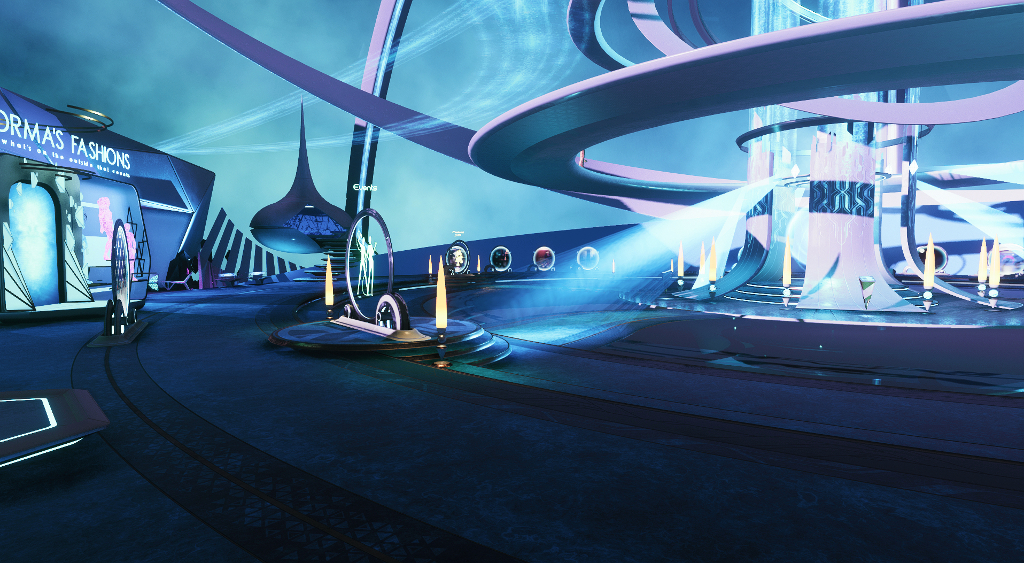

- The entire approach with the Nexus is to be re-examined, specifically with the view to making it more linear for incoming users to get from it to the event they wish to attend / the most popular locations in Sansar.

- It is still planned to eventually extend the ticketing system so that world creators can use it with events they organise / host.

- The Sansar team is looking into improved documentation sharing, tutorials, offering tips and tricks through a wiki-style environment, world templates, etc.

Possible Changes to Accounts

- Wookey / Sansar are looking at things like the partnership with Steam, subscription options, and how they are structured, but there is nothing to announce as yet.

- The number of worlds a Free account can publish may be revised in the future. Whether those who have already published more worlds than any new limit allows will be able to keep all of them is TBD.

- There will likely be a tightening of requirements for users organising Sansar events (e.g. events may only be hosted by “authorised / approved / trusted” – details TBD – accounts).