The following notes were taken from the Twitch stream recording of the December 19th (week #51) Sansar Product Meeting.

Point Releases for R38

There have been three point releases for the R38 “Rediscover the Party” release since December 10th, none of which have had release notes posted to the Sansar website. These point releases focused on bug fixes and performance improvements.

Linden Lab and IP

There has apparently been a lot of discussion about (and confusion over) the Lab’s approach to IP protection on Sansar. As a result, Lacie Linden has published “A Word About IP” on the Sansar blog. This specifically references Linden Lab’s

Both can be found on the Lab’s corporate website, and both apply equally to all of the Lab’s platforms.

Plans for 2020

Emotes

- An updated emote (gestures / animations) system is coming “soon”. This will include the ability to obtain an emote from the Sansar Store, use it immediately, and assign a keyboard shortcut to it.

- Emotes will also be per account in the future, not per avatar – a move intended to remove the need to re-apply emotes to each avatar a user creates.

- A downside is that, when first introduced, the new emote system will mean scripts will no longer be able to access the number keys without triggering an emote.

- However, users will be given a means to re-bind keys as they wish, so scripts will no longer point at a specific key, but at an identifier to which any key can be assigned.

Animations

- Synchronised dancing for music events – probably built into the dance floor, which will set avatars dancing, rather than relying on emotes.

- The API will allow for both full-body and upper-body animations, played looping and / or once. This won’t interfere with people wanting to use their own dances.

- The system will also support other uses of animations.

- The default idle animation is to be improved.

Backpack

The Backpack is to be extended so that individual scenes (worlds) can define what the Backpack contains. This will include a scripting API to define the state / content of the Backpack at different points in a world (e.g. reaching a point in a quest where you obtain an item, which is then unlocked in the Backpack and available for use).

New User Experience / First 10 Minutes in Sansar

The new user experience is to be entirely rethought, including changes to the initial on-boarding quest. The aim is to align on-boarding more closely with music and live events, the current focus for Sansar development and audience acquisition.

Moderation Tools

These are to be enhanced, initially for Lab staff and then for world creators (e.g. muting people more easily, removing them from a world, etc.), together with in-client improvements for managing whitelists and for raising tickets.

Avatar

- The ability to upload and use custom skins will hopefully be one of the first avatar updates of 2020.

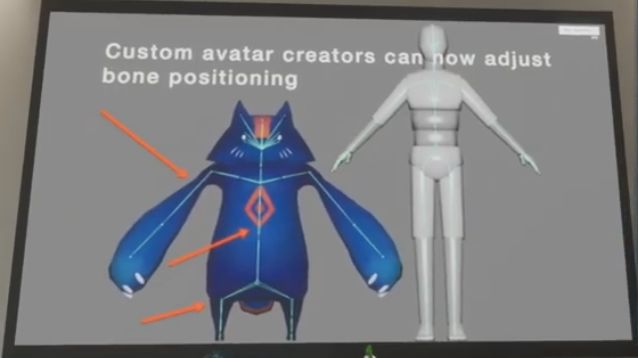

- A lot of work has been completed on the full-body deformation capability, but it is not clear where this work sits in the 2020 schedule.

UI Re-design

There will likely be a “comprehensive” client UI redesign in 2020, aimed at reducing the number of clicks required to reach certain capabilities and improving the information available in certain panels (e.g. local chat), with options to use this information to access other panels (e.g. open a user’s Profile from local chat and then adjust the volume at which you hear their voice chat).

This work is forming the “tail end” of a lot of “other, bigger changes”.

Q&A Session Summary

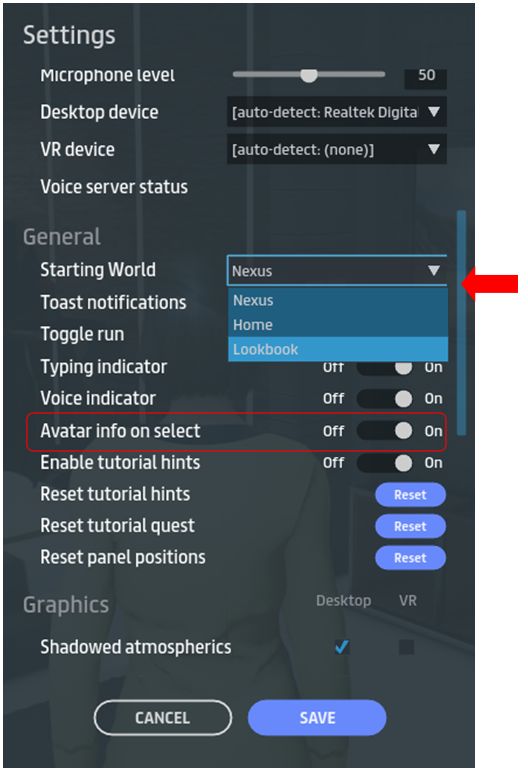

- There have been reports of issues with the Look Book: items not updating when changing an avatar, and saving updates taking a long time. There has been some work on the Look Book, and this may have caused a few bugs, which are being / have been addressed by the Sansar team.

- Nexus sounds: the background sounds (referred to as the “old sounds”) are interfering with the music, etc. These are to be removed.

- The bug by which a blocked individual can continue to harass in local text chat is being investigated.

- The Sansar team is looking at a lot of the pain points within the content creation process in order to try to smooth the process end-to-end and make it easier for content to be brought into Sansar and used to create scenes and worlds. This work may encompass things like UI improvements and allowing creators to version their worlds.

- Will there be an AFK indicator when people are away from their computers? This is being considered, but it is not clear whether it will be SL-like or something like returning an avatar to their Home Space after a set period of inactivity.

- Tipping: this is also being looked at, but may be geared towards tipping “official” performers rather than a generic person-to-person exchange of Sansar Dollars.

- A Sansar mobile client is still on the road map, but there is no significant update at the moment beyond confirmation that it is being worked on.

- Why is it still necessary to run events in a copy of a world, rather than the original? This comes down to the way the ticketing system works, which is frustrating for both the Lab and world creators, as the system is still inward-facing (creators cannot yet use it to generate revenue from their own events).