The Content Creation Improvement Informal User Group has started work on putting together an exploratory proposal for consideration by Linden Lab on the subject of morph targets.

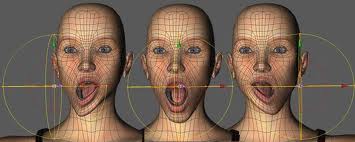

Morph targets (also known in some 3D applications as shape targets, per-vertex animation, blend shapes or shape interpolation) are generally used for complex animations that would otherwise be hard to accomplish with skeletal animation, such as facial animation and avatar customisation. In this latter respect, the SL viewer already uses morph targets to a degree. The proposal being drafted is aimed at a more widespread use of morph targets, in such areas as:

- Complex facial animations on rigged meshes

- Additional ways for a user to customize the appearance of their avatar

- Animating the surface of a prim without the need to use a custom skeleton

- Fine-grained control for content creators over how their clothing and avatars deform

It is with regard to this last bullet point that morph targets are particularly interesting to content creators, as they are seen to have significant potential advantages for clothing deformation over what might otherwise be offered by either the parametric deformer or RedPoly’s alternative approach.

The proposal outlines some of the drawbacks of these two approaches, and covers some of the advantages and issues in adopting morph targets. One advantage of morph targets is that they would allow a content creator to “sculpt” how a morph target should appear, directly within their 3D application of choice, thus giving them direct control over how a mesh deforms around an avatar (or how a rigged mesh replacement avatar deforms around the base avatar). A potential issue with the approach is that morph targets require additional information to be encoded either within a mesh or within a texture. This means that additional bandwidth will be required to transmit any mesh which uses morph targets.
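To make the trade-off concrete, here is a minimal sketch of how morph target blending is commonly done in 3D applications: each target stores an alternative position for every vertex, and the deformed mesh is the base mesh plus a weighted sum of the offsets. This is only an illustration, not how the SL viewer or the proposal would implement it. The function names, the `smile` target and the assumption of uncompressed 32-bit floats are all hypothetical.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def blend_morph_targets(
    base: List[Vec3],
    targets: Dict[str, List[Vec3]],
    weights: Dict[str, float],
) -> List[Vec3]:
    """Return base + sum(weight * (target - base)) for every vertex."""
    result: List[Vec3] = []
    for i, (bx, by, bz) in enumerate(base):
        x, y, z = bx, by, bz
        for name, weight in weights.items():
            tx, ty, tz = targets[name][i]
            # Each target contributes its offset from the base, scaled by its weight.
            x += weight * (tx - bx)
            y += weight * (ty - by)
            z += weight * (tz - bz)
        result.append((x, y, z))
    return result


def extra_bytes(vertex_count: int, target_count: int, bytes_per_component: int = 4) -> int:
    """Rough extra payload, ASSUMING one uncompressed XYZ triple per vertex per target."""
    return vertex_count * target_count * 3 * bytes_per_component


# A single triangle with one hypothetical target that lifts the second vertex.
base_mesh = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
morphs = {"smile": [(0.0, 0.0, 0.0), (1.0, 0.0, 2.0), (0.0, 1.0, 0.0)]}

half_smile = blend_morph_targets(base_mesh, morphs, {"smile": 0.5})
print(half_smile)            # second vertex moves halfway towards the target
print(extra_bytes(1000, 4))  # extra bytes for a 1000-vertex mesh with 4 targets
```

Under these assumptions the extra data grows with both the vertex count and the number of targets, which is the source of the bandwidth concern. A real implementation would likely compress or quantise the offsets.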

As it stands, the proposal is in its early phase, although the intention is to complete it and submit it to LL for consideration and feedback as soon as it is felt enough information has been put together. Geenz Spad, co-chair of the CCIIUG, is aware that at the moment, much more input is required in order to get to that stage.

“There could be more input with regards to the content creator’s and the technical perspectives,” he commented to me in discussing the proposal. “The biggest bottleneck is just getting enough input to finish the proposal at this stage.” Of the two perspectives, Geenz feels that the technical side is perhaps the more lacking, and he would like to see more input from those of a technical mind on potential feature implementation, advantages, disadvantages, possible issues, and so on.

If you are in a position to provide input to the proposal itself, your views would be most welcome, as would your presence at the weekly CCIIUG meetings, which take place every Tuesday from 15:00 SLT at the Hippotropolis Auditorium.

Related Links

- Content Creation Improvement Informal User Group (SL wiki)

- Morph targets proposal (please do not edit without speaking to Geenz Spad / Siddean Munro)

- What are morph targets? (About.com)

- Morph target animation (Wikipedia)

- CCIIUG articles in this blog

I think they should definitely apply them to standard avatar’s facial expressions, or at least augment the capabilities that already exist as far as facial expressions. The way it is now, the expressions are not very subtle or easy to control.

It would be awesome to have them for mesh etc. too!

I wish they could have much better standard animations!
