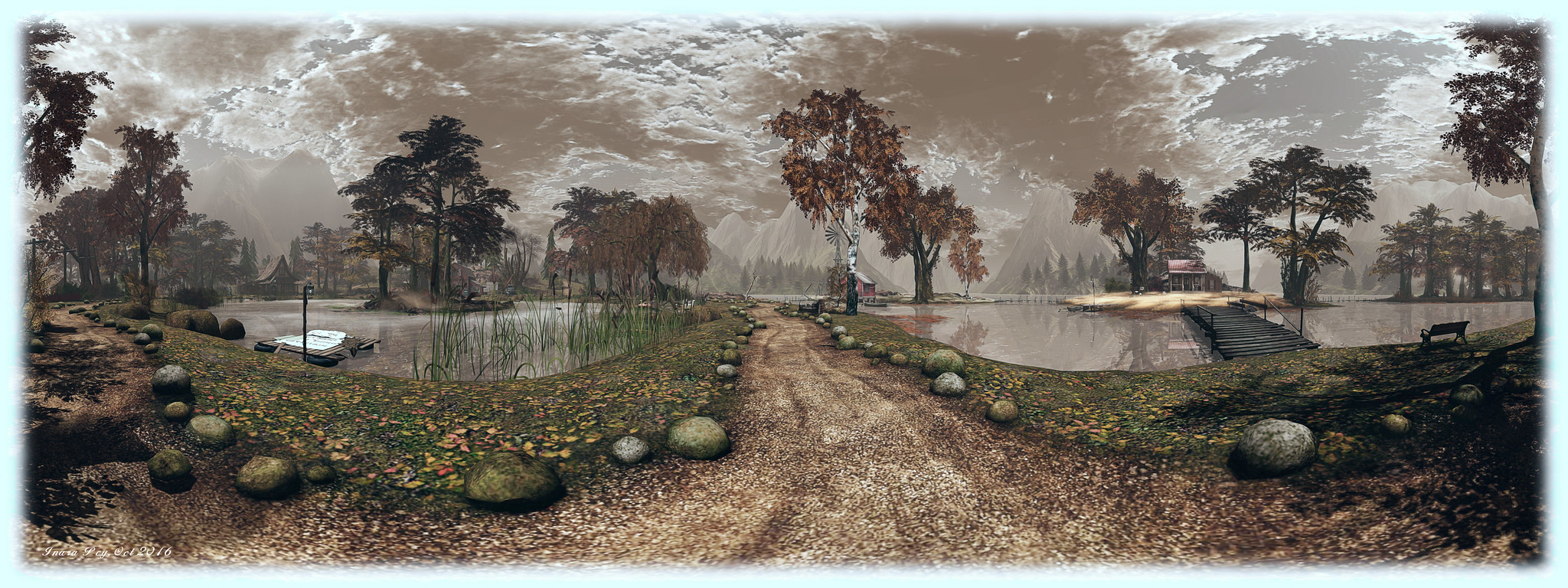

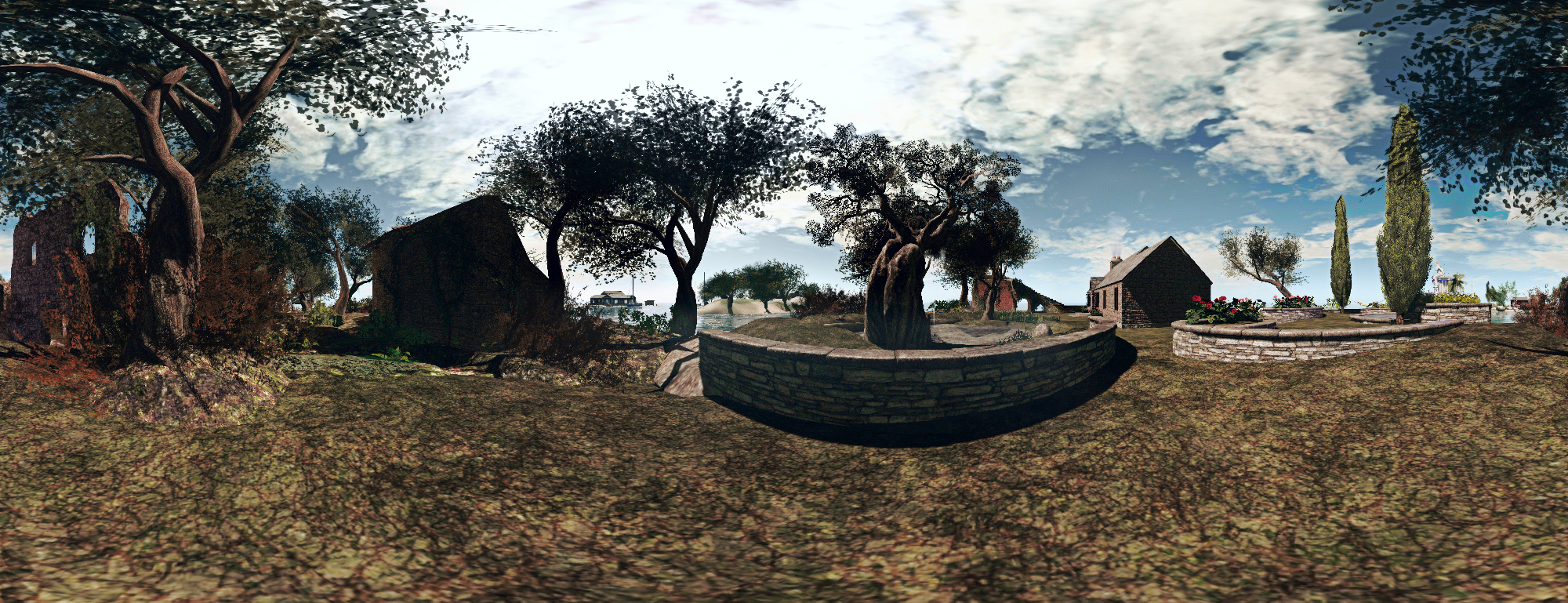

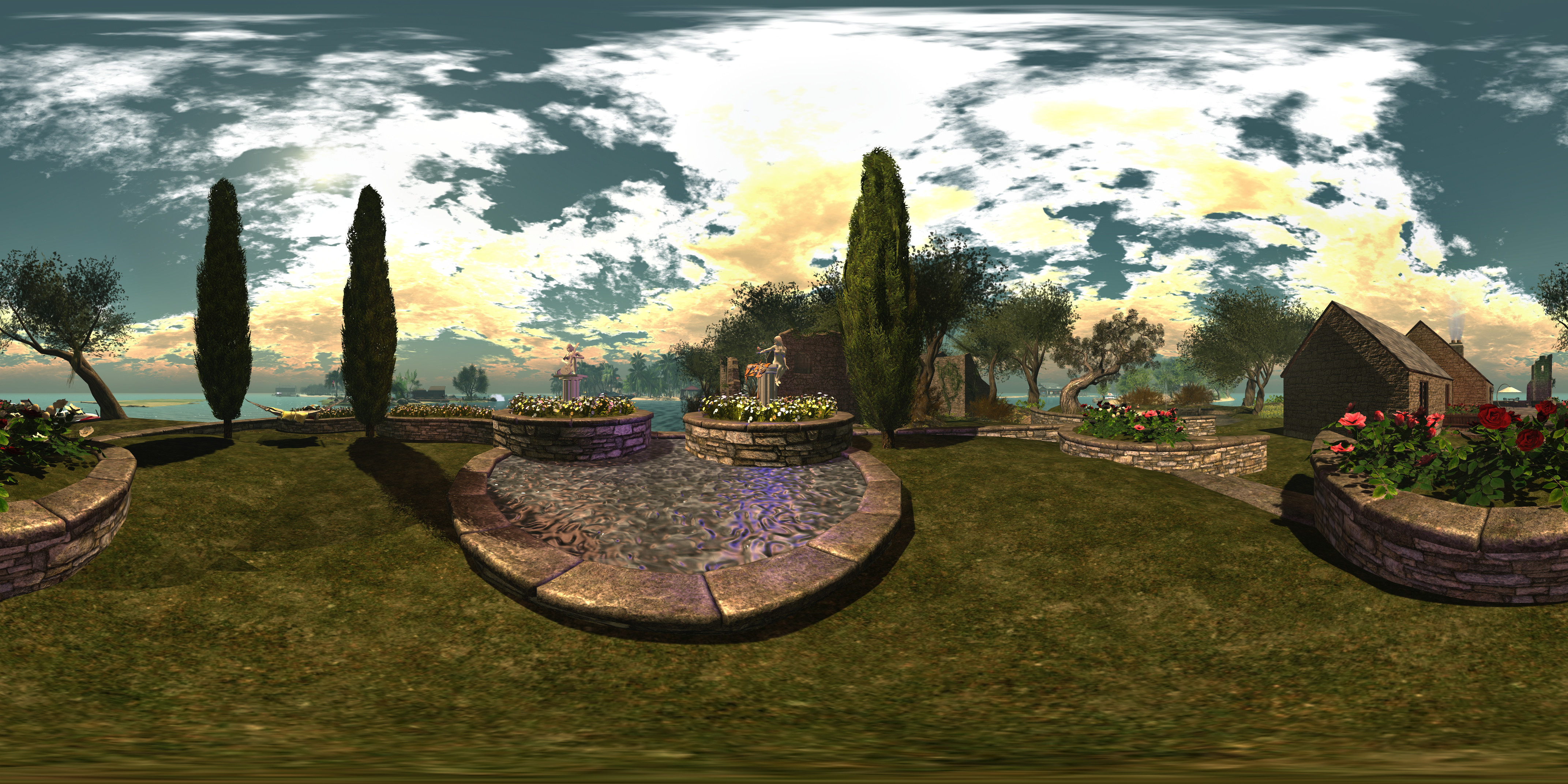

Camera Panoramic 360° spherical demonstration via Flickr – click to view with 360° scrolling


Following my look at the Illiastra Panoramic Camera HUD, Lalwende Leakey invited me to try the HUD system she has been developing for capturing 360° cylindrical and spherical images from within Second Life.

Called Camera Panoramic, the system is a comprehensive package for producing cylindrical (rectangular) images and full 360° spherical images suitable for uploading to Facebook, VRchive, Flickr and other platforms, or for uploading as 360° videos on YouTube, as well as planar images and 360° “little planet” images. A set of image presets is built into the HUD to make taking shots easier – including the ability to take “selfies” of your avatar in 360° views.

Full documentation for the HUD is available on the web and as a downloadable PDF file. In this article I’ll be looking at some of the core aspects of the system and running through how to produce a 360° image for uploading to Flickr.

The HUD

The Camera Panoramic HUD comprises 8 buttons, summarised below and described in detail in the supporting documentation.

Note that some of the camera placement buttons may appear greyed-out. This is because they work as toggles: clicking one shows it in blue (active), while the others turn grey – as is the case with the camera placement options in the image above. The camera image options are only available when the HUD is capturing images.

All of the options can also be accessed via gesture-driven hot keys and chat commands on channel /3, as detailed in the camera documentation; however, I’ll be referring to the HUD buttons throughout this article.

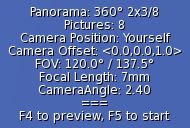

When worn, the HUD also displays information in text. Again, full details on this information can be found in the camera documentation, but in brief, the information comprises: the image type you’re using (cylindrical or 360°) with the selected preset; the number of pictures to be captured; whether you are using a camera offset (X, Y, Z axis); and the required camera defaults.


Note that FOV and Focal Length are determined by the preset, but you must manually set the Camera Angle within your viewer (you may also need to remember the Focal Length value for stitching your shots together into a single image during post-processing).

To set the camera angle, use either the CameraAngle debug setting (press CTRL-ALT-SHIFT-S to open the Debug Settings floater and type CameraAngle) or the Cam tab in Phototools.
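If the preset gives its FOV in degrees, the value has to be converted before it goes into CameraAngle, which (as far as I know) takes the field of view in radians; the viewer's default of about 1.047 is 60°. The sketch below assumes exactly that, and the function names are purely illustrative. With the square window used for spherical shots, the horizontal and vertical FOV are the same, so one number covers both.

```python
import math

# Assumption: the CameraAngle debug setting holds the field of view in radians
# (the viewer default is roughly 1.047, i.e. 60 degrees).


def fov_to_camera_angle(fov_degrees: float) -> float:
    """Convert a preset FOV given in degrees to a CameraAngle value in radians."""
    return math.radians(fov_degrees)


def camera_angle_to_fov(camera_angle: float) -> float:
    """Convert a CameraAngle value in radians back to degrees."""
    return math.degrees(camera_angle)


# Hypothetical preset with a 90-degree FOV
print(round(fov_to_camera_angle(90.0), 4))      # 1.5708
print(round(camera_angle_to_fov(1.047198), 1))  # 60.0
```

Typing the rounded radian value into CameraAngle should be accurate enough; check the HUD documentation for the exact figure each preset expects.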

Before starting to take shots with the HUD, there are some things you’ll need to do:

- Set your preferred windlight and daytime settings.

- Make sure you freeze the clouds – you’ll be taking up to 26 images which will need to be stitched together, and moving clouds could make that a bit of a bugger to do. Use Menu > World > Environment Editor > Sky Presets > Edit Presets or Phototools > Clouds and tick the scroll lock checkboxes.

- If you are using the At Avatar position, hide yourself from view – use the supplied alpha mask after removing all attachments, or use something like a Vanish gesture. Otherwise, parts of your avatar will appear in some of the shots.

Taking Your Shots

Important Notes:

If you want to upload your images to something like Flickr, VRchive or Facebook, you should use the 360° spherical image type, as this will produce an image in the required 2:1 (width:height) aspect ratio.

When using the 360° spherical image type, you must set your viewer’s window size to a square aspect ratio. Use Advanced > Set Window Size (press CTRL-ALT-D to display the Advanced menu, if required) or, if you have Phototools, click on Aids > Set Window Size.

Either option will display the Window Size floater (right) – type 1024×1024 in the text box and click Set. Your viewer window will resize itself.
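To see how the square 1024×1024 shots relate to the 2:1 final image, here is a rough way to estimate a sensible size for the stitched equirectangular panorama. It is a sketch under stated assumptions: each shot is an undistorted (rectilinear) square frame, the 90° FOV in the example is hypothetical rather than taken from a specific preset, and `equirect_size` is an illustrative name. Your stitching software may well choose its own output size.

```python
import math


def equirect_size(frame_px: int, fov_degrees: float) -> tuple[int, int]:
    """Estimate a 2:1 equirectangular size that avoids upsampling the shots.

    A rectilinear frame has the fewest pixels per degree at its centre, so
    matching that density keeps the panorama at or below source resolution.
    """
    # Focal length in pixels for a rectilinear frame of the given FOV
    focal_px = (frame_px / 2) / math.tan(math.radians(fov_degrees) / 2)
    px_per_degree = focal_px * math.pi / 180
    height = int(180 * px_per_degree)  # 180 degrees of pitch, top to bottom
    return 2 * height, height          # 360 degrees of yaw gives the 2:1 ratio


print(equirect_size(1024, 90.0))  # (3216, 1608)
```

A narrower FOV packs more pixels into each degree, so it yields a larger panorama, at the cost of needing more shots to cover the sphere.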

Image Capture Basic Steps

Detailed instructions on taking shots with the HUD can be found in the documentation. The following is a summary of key steps:

- Prepare your viewer windlight, freeze the clouds and, for 360° spherical images, set the viewer window size.

- Wear the camera HUD and select your desired preset.

- Make sure the preset’s Camera Angle is set in the viewer (see above).

- Select the required camera position in the HUD (remembering to hide yourself if using At Avatar).

- Tap ESC to free your camera, then click the Start button on the HUD to position the camera for your first shot, and press CTRL-` (the tilde key) to capture your first frame to disk.

- The first time the camera is used, you’ll be asked for a file name and location for the shot; all subsequent shots will be automatically saved to this location.

- Subsequent uses of the HUD will automatically save shots to the last location you used to save snapshots to disk when using the snapshot floater.

- Click the Right arrow on the HUD to advance the camera, and press CTRL-` (the tilde key) to save each of the remaining shots. After saving the last shot, the camera will automatically exit capture mode.

You should now have a set of images ready for stitching together.
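For a feel of how a spherical set reaches the 26-image maximum mentioned earlier, the sketch below lays out one common panorama pattern: three rings of eight shots at different pitches, plus one straight up (zenith) and one straight down (nadir). This is a hypothetical layout for illustration only; it is not the HUD's actual sequence, which is set by its presets, and `capture_sequence` is an invented name.

```python
def capture_sequence(rows, zenith=True, nadir=True):
    """Build a list of (yaw, pitch) camera directions in degrees.

    rows: list of (pitch_degrees, shots_in_row); shots in each ring are
    spaced evenly around the full 360 degrees of yaw.
    """
    shots = []
    for pitch, count in rows:
        step = 360 / count
        for i in range(count):
            shots.append((i * step, pitch))
    if zenith:
        shots.append((0.0, 90.0))   # straight up
    if nadir:
        shots.append((0.0, -90.0))  # straight down
    return shots


# Hypothetical layout: rings at +45, 0 and -45 degrees of pitch, 8 shots each
layout = capture_sequence([(45.0, 8), (0.0, 8), (-45.0, 8)])
print(len(layout))  # 26
```

Each neighbouring pair of shots needs some overlap for the stitching software to match them, which is why a full sphere takes so many frames.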

Selfies / Camera Offsets

Camera Panoramic has a set of presets for “selfies”, allowing you to include your avatar in your images. The process for capturing is the same as above, and the presets are selected by clicking the Offset button on the HUD, then selecting Preset from the dialogue box. The presets are defined by starting position.

Remember that the Offset option also allows you to move the camera in increments of 0.1, 1.0 or 10 metres along the X, Y and Z axes – refer to the camera’s documentation for more on this.
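To illustrate how stepping an offset in fixed increments adds up, here is a small model in Python. The HUD itself runs inside Second Life, so this is not its code: `CameraOffset` and `nudge` are hypothetical names, and only the 0.1, 1.0 and 10 metre step sizes come from the HUD's description.

```python
from dataclasses import dataclass, replace

# Increment sizes offered by the HUD's Offset option, in metres.
STEP_SIZES = (0.1, 1.0, 10.0)


@dataclass(frozen=True)
class CameraOffset:
    """Hypothetical model of a camera offset from the capture point, in metres."""
    x: float = 0.0
    y: float = 0.0
    z: float = 0.0

    def nudge(self, axis: str, step: float, direction: int = 1) -> "CameraOffset":
        """Return a new offset moved by one increment along one axis."""
        if axis not in ("x", "y", "z"):
            raise ValueError(f"unknown axis: {axis!r}")
        if step not in STEP_SIZES:
            raise ValueError(f"step must be one of {STEP_SIZES}")
        if direction not in (1, -1):
            raise ValueError("direction must be 1 or -1")
        current = getattr(self, axis)
        # Round to 0.1 m so repeated small nudges don't accumulate float error.
        return replace(self, **{axis: round(current + direction * step, 1)})


# Raise the camera 1 m, then shift it 0.1 m back along X
offset = CameraOffset().nudge("z", 1.0).nudge("x", 0.1, direction=-1)
print(offset)  # CameraOffset(x=-0.1, y=0.0, z=1.0)
```

Keeping each offset immutable makes it easy to record the exact position used for a set of shots if you want to repeat it later.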

Continue reading “The Camera Panoramic: 360 photo fun in Second Life”