The end of April was a busy time for the Lab, with Ebbe Altberg leading a team to the Collision 2016 tech conference (billed as the “anti-CES”) in New Orleans, which ran from April 26th through 28th, and to the 2016 Silicon Valley Virtual Reality (SVVR) conference, which took place at the San Jose, California, conference centre between April 27th and 29th.

At both events, Ebbe Altberg gave a presentation which included further images and some video footage from within Sansar. Collision 2016 has now made these available in a recording of Ebbe’s presentation, which can be found on YouTube.

As the Collision event is more general tech than VR-specific, the first part of the video is more about the potential of VR and the possible future VR / AR marketplace. A lot that is familiar to SL users is mentioned, such as the use of immersive spaces for social activities and the potential VR has in areas such as education, design, business and healthcare (the use of Second Life in helping PTSD sufferers is touched upon, something I covered back in June 2014).

For those wishing to cut to the chase, the Project Sansar discussion starts at the 8:18 point in the Collision video.

![[05:52] The Sorbonne University and the Egyptian Ministry of Antiquities worked with Insight Digital to produce a 3D model of an ancient tomb based on digital photography and laser scanners. The initial model comprised 50 million polygons, which the Lab were able to publish through Sansar as an optimised 40,000 polygon model that visitors to the experience could explore and interact with](https://modemworld.me/wp-content/uploads/2016/05/sansar-collision-1.jpg?w=700)

- Reveals the Lab is now employing around 75 people in R&D on Project Sansar (High Fidelity, as a simple comparison, has around 25-30 staff)

- Indicates the broad base of creators and “content partners” invited into the initial platform testing, which started in August 2015, such as the Sorbonne University / Insight Digital (see above)

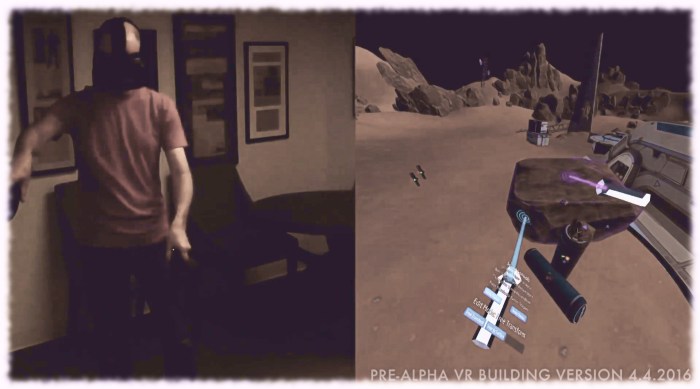

- We get to see both the editing environment and the runtime environment elements of Project Sansar (remembering that the actual editing / layout mode of Sansar is quite separate from the runtime environment where users actually engage with one another once experiences have been “published” to it)

- Reiterates that in using the term “creator”, the Lab isn’t necessarily just referring to content creators as might be the case within SL. Rather, the term also encompasses those who purchase original content within the platform and use it to create their scenes and spaces. It is ease-of-use for this broader class of creator that the Lab is currently addressing within the platform.

I’m actually curious to know more about the edit mode / runtime split. For example, can an experience still be accessed by others while it is being edited, in the same way a WordPress (to use the Lab’s analogy) page can still be viewed and read by others? If so, what happens when an update for an experience is published?

The video showing Project Sansar’s runtime environment commences at the 12:38 mark.

In particular, this reveals a number of locations – including the Mars scene, the Golden Gate seen previously in Project Sansar promo shots, and a camera trip into the ancient Egyptian tomb mentioned above.

My own observations from these video clips are that:

- Project Sansar potentially has a higher level of polish within the runtime environment than High Fidelity has thus far shown

- In their appearance, Project Sansar avatars are at least as good as the more advanced avatars currently found within High Fidelity, and certainly more immersively attractive than the more recent iterations of the Altspace VR avatars

- It will be interesting to see how dynamic things like day / night cycles and weather are / will be handled by Project Sansar.

The video includes some hints at the client UI – remember it is still very much a work-in-progress, so there are likely to be many changes.

As it stands, the buttons are ranged against the right side of the screen in two groups of four, one at the top and one at the bottom.

Some of these appear reasonably obvious: the landform / terraform tool and Avatar tool at the bottom of the first group of icons, and microphone, help and exit / log-off options in the second group of four.

Doubtless the range of buttons and options available will increase / grow more sophisticated as the UI continues to develop. The current set would appear to simply address the capabilities within the platform at present.

The Lab is apparently still considering whether or not to make the video footage of Sansar more generally available. Given the overall tone and presentation of the runtime footage, complete with music, I’m tending to assume that it was put together as a potential promo piece, rather than just a video to show at presentations. So hopefully it will make a broader appearance when the Lab judge the time to be right.

Funnily enough, the icons ranged against the side of the screen reminded me of this:

Whether it comes from the same mindset, or it is instinctive in alphas and pre-alphas when you have few buttons, an almost full screen / non-invasive / non-distracting interface like that isn’t bad, especially for something as visual as VR.
