This item is a follow-on from part one, published earlier this week.

More Server News

At the Beta Grid User Group meeting on Thursday 11th October, and prior to the network optimisation tests, Oskar gave further news on the server deploys for week 41. On Tuesday 9th October, the main channel received code previously on BlueSteel which, in keeping with Simon Linden’s comments at the Monday Sim / Server UG meeting, Oskar described as, “A pretty small release, just some server crash mode fixes; stability ++.”

On Wednesday 10th October, BlueSteel and LeTigre received a fix for some database queries that were very slow when accessing very large groups. Note that this is not Baker Linden’s Group Services code, which is being looked at for deployment in week 42.

Monday 16th October may see some restarts on the grid in order to shuffle some regions onto new hardware. The new servers have more and faster CPU cores, so while each will host a greater number of simulators, those simulators will be running on faster cores.

Interest Lists and Object Caching

The short-version update for this comes from Andrew Linden, speaking at the Server Group meeting on Friday 12th October, “I thought I’d have something working this week… it isn’t quite working right. You can see it not working on Ahern on Aditi…” (!)

He went on more seriously to explain that while the new code is working correctly for the most part, and rezzing order should be improved and faster, there are some problems with objects which should be in view of an avatar not showing up, and a major issue around teleporting to high ground.

When the latter happens, you effectively arrive “underground” (presumably at the default “ground level” for the simulator – 21 metres in the case of unterraformed land). The simulator then calculates where you should be and moves your avatar appropriately. With the new code, this has the effect of breaking the server’s notion of the camera – where it is and what it can see – which is used to figure out what objects to send to the viewer. This means that the camera itself cannot be moved or updated.

There have been some performance tests on an older version of the code, with mixed results, as Andrew also explained, “There were two performance tests run on an earlier version. One test (mostly empty region with about 30 avatars running around) showed a slight decrease in performance… about 5% worse. Another test (crowd of avatars NOT looking at a pile of dynamic objects behind them) showed about 40% improvement (less time spent running the interest list). So I went back to the code to try to fix the first test, and I think I’ve got something that will be as good or better all around.”

The code will also see changes as to how the camera behaves and in the resultant level of detail. Andrew is currently working on limiting the distance the camera can be moved away from the avatar. Note this is not limiting Draw Distance, but limiting the distance the camera can be freely moved independently of the avatar. He’s considering 128 metres to be the likely range. There are two reasons for this. One is to prevent the camera wandering into regions which are more than one neighbouring region away. The other is that, as the camera moves laterally, detail levels currently degrade, because object detail is tied to the avatar’s position (hence why, when you zoom a great distance, buildings and objects may only appear to partially rez, etc.). Under the new system, object detail will be tied to the camera, so that little degradation is experienced. However, in order for this to work, the camera must be kept within a reasonable distance of the avatar; if it is moved too far, the detail will start to degrade once more (presumably because of the volume of data the viewer is trying to handle).
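To illustrate the kind of limit being described, here is a minimal sketch of clamping a camera position to a maximum distance from the avatar. It is purely illustrative and is not Linden Lab's code: the function name `clamp_camera`, the constant name and the use of simple (x, y, z) tuples are all assumptions, and only the 128 metre figure comes from Andrew's comments.

```python
import math

# The 128 m range is the figure Andrew is reported to be considering;
# the constant name itself is an assumption made for this sketch.
MAX_CAMERA_OFFSET_M = 128.0


def clamp_camera(avatar_pos, camera_pos, max_offset=MAX_CAMERA_OFFSET_M):
    """Return camera_pos, pulled back along the avatar-to-camera line
    if it lies further than max_offset metres from avatar_pos."""
    # Vector from the avatar to the requested camera position.
    offset = [c - a for a, c in zip(avatar_pos, camera_pos)]
    distance = math.sqrt(sum(d * d for d in offset))
    if distance <= max_offset:
        # Within range: the camera is left where it is.
        return tuple(camera_pos)
    # Out of range: scale the offset down so its length equals max_offset.
    scale = max_offset / distance
    return tuple(a + d * scale for a, d in zip(avatar_pos, offset))
```

With this sketch, a camera requested 256 metres east of the avatar would be held at 128 metres, while a camera 10 metres away would be left untouched. Draw Distance is not involved at any point, which matches the distinction made above.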

Mesh Deformer

On Thursday 11th October at the Open/Dev meeting, Darien Caldwell outlined her ideas for using base shape info exported from Second Life when uploading rigged meshes.

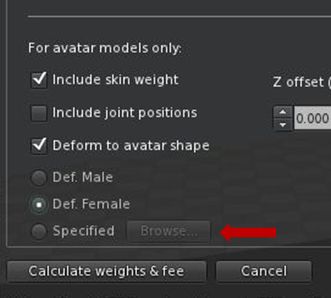

If this works, it will essentially mean that rather than being restricted to using a default base female or male shape when uploading rigged meshes, creators will be able to download a human shape as an XML file (permissions allowing), and then specify this shape when uploading rigged meshes. The basic code for handling the upload with specific avatar shape information has already been added to the deformer by Qarl Fizz, so Darien is focusing on the best way to use it, with her work going into a fork of the existing Mesh Deformer project viewer.

Avatar shapes can currently be exported from a viewer via DEVELOP -> AVATAR -> APPEARANCE TO XML (again subject to the permissions system). This saves the avatar shape data as an XML file, which contains the settings from the appearance sliders, and which is automatically saved to your computer (generally to C:\Users\[USERNAME]\AppData\Roaming\SecondLife\user_settings for Windows).
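As a rough idea of how a tool might read slider settings back out of such a file, here is a minimal sketch using Python's standard XML parser. The layout shown is hypothetical: the `shape_export` root element, the `param` elements and their `name` / `value` attributes, and the sample slider names are all assumptions made for illustration, and the real exported file may be structured differently.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample data: element names, attribute names and slider
# names are assumptions, not the viewer's confirmed export format.
SAMPLE_XML = """<shape_export>
  <param name="height" value="0.25" />
  <param name="body_fat" value="-0.10" />
</shape_export>
"""


def read_shape_params(xml_text):
    """Return a dict mapping slider name to its numeric value."""
    root = ET.fromstring(xml_text)
    params = {}
    # Walk every <param> element, wherever it sits in the tree.
    for element in root.iter("param"):
        name = element.get("name")
        value = element.get("value")
        if name is None or value is None:
            continue  # skip entries missing either attribute
        params[name] = float(value)
    return params
```

Calling `read_shape_params(SAMPLE_XML)` gives a plain dictionary of slider values, which is the sort of structure an uploader or a modelling-tool plug-in could then work from.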

To associate an avatar shape .XML file with a mesh, Darien is proposing a further revision to the mesh uploader floater, and has provided an early mock-up as to how it might look.

More work is required to flesh out this idea, including, as Oz noted at the Open/Dev meeting, making the shape export option more obvious for people to use, which will more than likely see it moved out of the Develop menu, wherein it is currently nested. The .XML file itself is not suitable for use directly in most 3D modelling programmes, so how the exported data might be used with these when creating mesh items remains to be seen. Nevertheless, if successful, Darien’s approach may offer a more fine-tuned solution to developing mesh clothing to fit a range of shapes.

Other items

Viewer and FMOD

The use of FMOD has been the subject of much discussion within the TPV/Dev meetings of late. FMOD is used within the sound system for the Viewer, and until now, Linden Lab has provided a script which pulls library files from an FMOD repository for use in viewer builds. However, following what appears to have been a clean-up of their archives, FMOD have removed some of the legacy files required for this, as reported in JIRA OPEN-150.

Some viewer developers have already started using FMODex within their builds (e.g. Singularity 1.7.0+), which also addresses issues with sound quality. Other TPVs are looking at possibly integrating this work into their builds.

It currently appears as though Linden Lab themselves are looking to integrate FMODex, as they see this very much as something which needs to be addressed. Speaking at the TPV/Dev meeting on Friday 5th October, Oz Linden stated: “I got around to forwarding the JIRA on that to our engineering manager for Second Life, and he agreed with me that it is something we should definitely do something about. I’m not sure what the time-table on that will be, it’s going to go into the hopper for the next ‘Things we should do something about, what priority are they compared to all the other things we should do something about’ meeting, which happens weekly.” While OpenAL has also been suggested as an alternative, it does seem more likely that FMODex will be adopted, something which was hinted at by Oz when talking at the Open/Dev meeting on Thursday 11th October.

Teleport Timeouts

Baker Linden has been looking into the issue of teleport timeouts, and has managed to pin down one cause as a reproducible bug. He’s not sure as to whether it can be fixed, and is currently investigating further as to why it is happening.

I am not happy to hear about the 128m camera limit. I very often cam across a sim to scope out things, in particular to see people. This is useful for say, helping, peacekeeping, and finding events/crowds. (In fact, I did it just now while reading the post, camming to a newbie in a help area who had jumped into a car, to be sure he wasn’t running down other newbies).
