How it Will Work

Once the system has been implemented, it will be possible to create specular and normal maps outside of Second Life and then upload them as individual assets just as textures and sound files, etc., are currently uploaded. Once uploaded, the maps can then be applied to in-world objects and object faces in much the same way as textures are currently applied, allowing them to be combined with one another and suitable textures to produce the finished material effect on the object itself.

As with any other content, maps could be created, uploaded and offered for sale (in packs with textures, for example), allowing builders, etc., to make use of them. Additionally, LL may offer a selection of normal and specular maps as a part of the system library found in people’s inventories.

For all this to work, a number of changes need to be made to Second Life: on the server side (storing the new maps as recognised assets, provisioning them to the viewer, etc.), within the viewer, and to the rendering system itself (so it can correctly interpret the data).

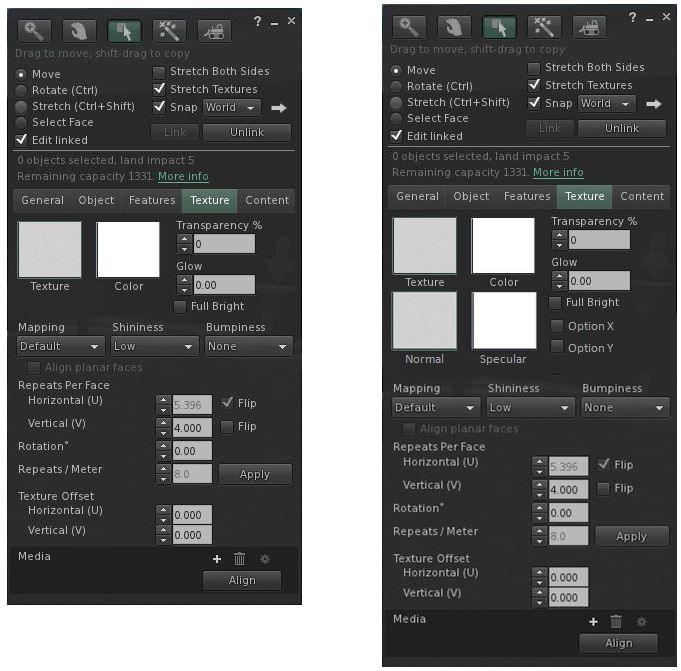

In the case of the viewer, a major area of change will be in the Texture tab of the Build floater, where additional pickers will be needed to allow the selection of normal and specular maps. Additional controls will also be required to allow things like the reflectivity / lighting in specular maps to be adjusted, although in the initial release, it is likely the texture, normal and specular maps will be locked to the same rotation, repeats, offsets, and so on (so it will not be possible to flip a normal map vertically without also flipping the other two).
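To make the "locked together" behaviour concrete, here is a minimal sketch in Python. It is purely illustrative and is not viewer code: the class names, field names and asset IDs are all hypothetical, and modelling a vertical flip as a negated vertical repeat is an assumption made for the example. The point is simply that a face carries three map references but only one set of placement values.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TextureTransform:
    """Placement settings shared by every map on a face (hypothetical names)."""
    repeats_u: float = 1.0
    repeats_v: float = 1.0
    offset_u: float = 0.0
    offset_v: float = 0.0
    rotation_deg: float = 0.0

    def flip_vertical(self) -> None:
        # Assumption for illustration: a vertical flip is modelled as
        # negating the vertical repeat count.
        self.repeats_v = -self.repeats_v


@dataclass
class FaceMaterial:
    """One object face: three optional map asset IDs and ONE shared transform."""
    texture_id: Optional[str] = None
    normal_map_id: Optional[str] = None
    specular_map_id: Optional[str] = None
    transform: TextureTransform = field(default_factory=TextureTransform)

    def transform_for(self, map_name: str) -> TextureTransform:
        # Every map resolves to the same transform object, so a change made
        # "to the normal map" is a change to all three.
        if map_name not in ("texture", "normal", "specular"):
            raise ValueError(f"unknown map: {map_name}")
        return self.transform


# Placeholder asset IDs, not real UUIDs.
face = FaceMaterial(
    texture_id="texture-asset",
    normal_map_id="normal-asset",
    specular_map_id="specular-asset",
)
face.transform_for("normal").flip_vertical()
```

After the last line, asking for the texture or specular transform returns the same flipped values, which is the restriction described above for the initial release.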

The Creative Process

Specular and normal maps have been on content creators’ wish lists for a long time, but they are capabilities that the Lab have perhaps viewed as being in the “some day” category. Given this idea is largely the result of a proposal from the Exodus team, how did it come about?

“I originally had an idea for encoding material properties in a texture,” Geenz Spad, one of the principal architects for the idea, explains. “I asked Oz Linden if this would be violating any policies, and he told me to try putting together a proposal. We started work on this in February. In less than a month [and dropping the texture encoding idea in the process], we had a functional proof of concept ready to show to the people at the Lab.”

Following this, there was an extensive period of discussion within both camps on how to approach the project, with different proposals being exchanged back and forth relating to how various parts of the feature should work. “The process was quite lengthy, given that we were having to work around an existing architecture and determine what could be used as-is in the existing architecture, and what we’d have to create from scratch,” Geenz goes on. “But the project was green-lit in July, and it’s fantastic to see its announcement to the public!”

Time Scale, Discussions and Feature Requests

No official time scale has been announced for the project as yet, but the initial feature set is now largely defined and it is unlikely that any additional capabilities will be added to the system prior to the initial release. However, a discussion thread has been started in the Building and Texturing sub-forum where ideas for future enhancements to the system can be discussed, and where questions can be addressed.

In addition, a JIRA (STORM-1905) has been created by Oz Linden in which feature requests for future updates to the system can be made, and in which links to detailed information on the functionality and design will be added as they become available.

Related Links

- LL blog post announcing materials processing

- Materials processing video on YouTube

- JIRA for information / feature requests

- Forum thread for discussion

The bumpiness option in the release viewers is already an example of normal maps, drawn from a set list of static UUIDs.

Yup… I just wanted to give a more dynamic example :).

I even saw an article eons ago about a creative resident who “hacked” those already-existing normal maps to apply their own to some objects, with impressive results. A pity I cannot find a reference to that, but it was eons ago, and of course, there is a limit to how many different bump maps one can apply, so this would only work for very limited examples. I always wondered why LL didn’t “finish its job” by allowing user-generated bump maps in the viewer; they’ve been in it since, at least, SL 1.4 (released in June 2004!).

Then again, I suppose these existing bump maps are software-generated (I’m just speculating, I never looked at the code!) while LL very likely will use OpenGL-based normal maps, which will use the GPU’s ability to process them directly.

As with Mesh, I think clothing that can use normal and specular maps will be huge. Imagine, for example, a leather corset. There are some lovely looking clothing layer corsets out there, as well as some spectacular Mesh corsets. But imagine being able to apply normal and specular level maps to the clothing layer corset. Suddenly, you have depth: what is in reality a texture mapped to the Avatar skin can now look like it isn’t simply painted on. For the Mesh corset, well, I think your relief example from Wikipedia says it all.

I, too, think mapping the avatar textures would be a *huge* win, and alleviate the need to (mesh) model every piece of clothing.

For sure 🙂 I wrongly assumed this would work on avatars as well. Hmm. Maybe the upcoming server-side baking of avatar textures will allow avatar-side maps, when it’s finished.

The reason for allowing these maps on avatar textures is actually simple. These days, only high-end content creators are able to design and model rigged meshes for avatar clothing. But avatar clothes used to be very simple to do, if you only used a simple template. The results weren’t overly impressive, of course, but it meant that everybody could design their own T-shirts very easily. This was a source of fun and an encouragement for amateurs to personalise their avatars easily. As flexiprims and later sculpties were introduced, amateurs had no choices left to enjoy tinkering with avatar clothing…

As Inara so well explained, even Photoshop can create normal maps and some sort of specular maps as well. This would allow amateurs to dust off their old templates, do some processing, and import a few maps which could make simple texture-based clothing look like it has far more realism and detail, thus making amateurs happier again.

With server-side baking of avatar textures, this would also mean that the current issue of downloading “lots of textures” in order to bake a full avatar would disappear. All the many layers, from alpha channels to the three levels of maps, would be baked on the server, and just three resulting textures distributed. So with Project Shining finished, this would be relatively easy to implement without generating much additional viewer-side processing and/or client-server communication.

Of course, not to mention the ability to create truly scary skins with disease pocks and wounds oozing pus… hehe

Inara, I just want to thank YOU for all the careful, thorough and thoughtful ways you manage to explain the new and the strange and even the mesh. Some facts, some examples, some maybes – no high tech jargon, no hyperbole & no harangues.

I wish your blog was required reading for residents.

Or maybe LL should hire you as actual Information Minister.

anyway, sincere thanks and appreciation

Thank you. The feedback is genuinely appreciated.

There is an interesting thing on your last paragraph. Why couldn’t normal/specular maps be encoded as textures as well, thus saving the need to create more types of assets? A pity that was not explained fully. After all, if you can “encode” a mesh using sculpties (or terrain files), maps are comparatively easier than that.

I suppose that the only issue might be related to the way image assets are compressed and recompressed and later decompressed in SL, which might create odd results. We used to have lots of oddities with the first generation sculpties because of that, but at some point, LL fixed all the issues.

Oh well, it’s worthless to speculate (pun intended!).

Which last paragraph? Page 1 I assume :).

TBH, I amassed a whole raft of questions and info, but had to cut things to a point where this article didn’t become a major case of tl;dr :). Also wanted to keep to the basics (as I’m going through a rapid learning-curve as well!).

Still… gives room for a follow-up I guess, unless all the answers pop up here :).

The texture encoding idea was intended to be more of a hack to get materials working in viewers that would support it. However, once we had the opportunity to just implement them without any weird looking hacks, we opted to just abandon the approach altogether.

Some of the blocking caveats that would have been involved had we proposed it to LL:

– Each tweak you made to a material would require you to upload a new texture (imagine paying L$100 for a material because you made 10 “small” tweaks to it)

– Parsing each material texture asset would have made it impractical in the long run due to parsing overhead (we’d have to figure out what each and every single pixel meant for each and every surface a texture was applied to)

– We would have needed some kind of “baking” mechanism to help save people money on uploading (meaning all of your changes would only appear to you until you rebaked the material texture and uploaded it, paying the usual L$10 fee in the process)

In the end we’re going for something significantly less hackish, and easier for everyone to work with.
