Windwept (General) May 2015 (Flickr) – blog post

Windwept (General) May 2015 (Flickr) – blog post

Server Deployments, Week 21

As always, please refer to the server deployment thread for the latest updates / news.

On Tuesday, May 19th the Main (SLS) channel received the server maintenance package previously deployed to the three RC channel, comprising:

- Internal server logging changes

- Back-end system bug fixes

- Reply-To email changed in postcard sends

As previously noted in these pages, the “reply-to email changed in postcard sends” relates to changing the way snapshots forwards to e-mail are handled. Until now, the Lab has substituted the user’s e-mail address in the “from” field of snapshots sent to e-mail, rather than displaying the “secondlife.com” address.

However, this added to issues of e-mail originating from “secondlife.com” being treated as spam by a/v software and ISPs. With the new format employed with this change, the sender’s e-mail address is given as the “reply to” address in the snapshot, and the “from” is “no-reply@secondlife.com”, thus avoiding the issue of LL looking like spammers who are forging invalid addresses.

There will be no RC deployment on Wednesday, May 20th.

SL Viewer

The week has not so far seen an RC viewer promoted to release status. If there is any promotion, it would most likely be the Layer Limits RC (currently version 3.7.29.301305). The Experience Tools RC viewer is still awaiting the completion of back-end work, while the Attachment Fixes RC (Project big Bird) currently has an elevated crash rate compared to the current release viewer, which includes a crash-on-exit bug, so further work is required on that RC.

CDN Provider Move

The Lab has been moving between CDN providers, and as a result, some people may have been experiencing particular texture / mesh / avatar rendering delays of late. Commenting on the process at the Simulator User Group meeting on Tuesday, May 19th, Oz Linden said:

We’ve just finished moving from one CDN provider to another, and it may take the caches a little while to catch up. We tried to do it gradually in a way that would be minimally disruptive, but when you’re dealing with as much data as we are, there are no perfect solutions.

One of the cases it is hoped the move will assist is with SL users in Florida (and neighbouring states) in the US who use Mediacom as their ISP, and who have found that there have been what appear to have been issues with Mediacom throttling the service at certain times of the day. Preliminary feedback from users so affected who have been involved in testing with the new CDN provider has been positive.

What Goes Through the CDN, And How

During the CDN conversation Oz reinterated the data that is currently delivered to the viewer through the CDN: textures, world map tiles, avatar baking data, and mesh data. In terms of in-world objects, two distinct operations are taking place:

- Where an object is, how big it is, and so on, comes to the viewer via the simulator, together with the UUIDs fr the relevant objects / textures

- The viewer then uses the UUIDs to fetch the mesh and texture data directly from the CDN.

As previously noted on these pages, this should mean faster loading of things like textures and mesh in-world, as the data is coming from a CDN node that is “local” relative to you, rather than coming to you from the Lab and through the simulator itself. However, experience is showing that for a small number of people, this isn’t always the case, and there can be situations where mesh and texture loading aren’t what might be expected. However, the Lab continues to try to improve things.

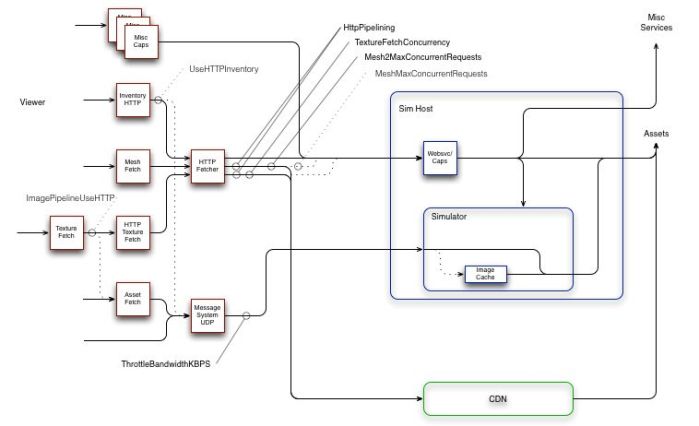

Second Life Network Architecture

Writing on the forums, noted SL photographer Jackson Redstar recently asked meshmaxconcurrentrequests – does anybody know the real setting? In the ensuing debate, Monty Linden offered an updated overview of the SL network architecture.

To borrow from Monty’s explanation:

- On the left, in red, are pieces of the viewer; on the right, in blue are simhost/simulators and other backend services; at the bottom (green) are new CDN services

- Solid lines with arrowheads are communication paths, either UDP or TCP/HTTP; dashed lines are legacy communication paths that are now or soon will be deprecated, obsoleted and/or deleted

- Sold ball-and-stick indicators (e.g. TextureFetchConcurrency) indicate a viewer debug setting and the communication path or paths that setting influences; dashed ball-and-stick indicators (e.g. MeshMaxConcurrentRequests) indicate obsolete debug settings.

Monty goes on to say:

Generally, things are moving in the direction of simplification and less resource conflict. The mesh and texture HTTP traffic, which is usually the greatest load, tends to part ways with the UDP traffic a few network hops after a user’s router or modem. Lacking TCP’s throttling mechanism, UDP often wins in a fight (give-or-take the efforts of fairness algorithms along the path). Allowing UDP to overrun the path between viewer and simulator does still degrade the experience and the bandwidth setting remains an effective tool for avoiding this problem.

Other settings should generally be left alone. A lot of bad advice was spread around in the community in an effort to work around throughput problems. We’re trying to undo that history and get back on track with more typical (albeit aggressive) HTTP patterns.

Viewer Caching

During the Simulator UG meeting, Oz repeated a call he originally made at a TPV Developer meeting recently, asking that if there is developer wishing to volunteer for a “deep dive” into viewer caching, he’d like to hear from them.

While interest list updates made key changes to how the viewer’s cache is used, there are numerous issues which appear to be viewer-side caching related, so a deep investigation into the code could go further towards improving things.

One long-standing issue, which is thought to be caching related, is If someone uses a texture rezzed in-world same texture for a group profile image or their avatar profile image or in a profile pick, the object will never fully load the texture.

So, if you’re a developer willing to looking into viewer-side caching, Oz would like to hear from you.