It’s been a while since I’ve reviewed any of the official SL viewers from LL, so here’s a quick round-up of recent releases.

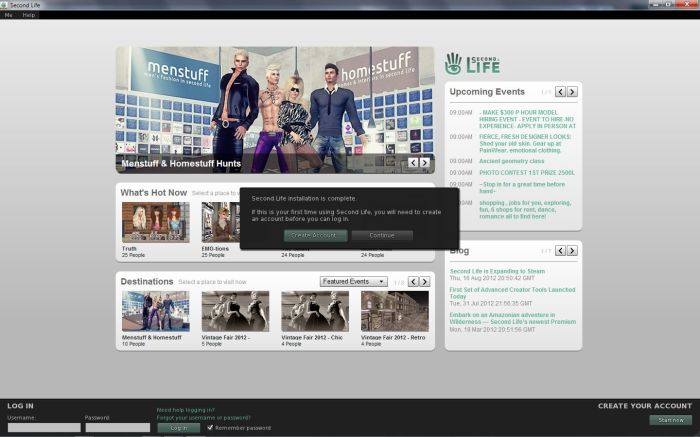

New Log-in / Account Creation Prompt

The new account creation prompt, which is displayed when the viewer cannot find any user settings files on a computer and which first appeared in the 3.4.1.263582 release (August 16th), now appears to be the default for all development / project viewers. It is part of both the most recent Mesh Deformer project viewer (3.4.1.264215, August 31st) and the new HTTP Group Services project viewer (3.4.1.264495, September 7th). However, it has yet to filter through to either the Beta or release versions of the viewer.

Mesh Deformer Project

August 31st saw a new release of the Mesh Deformer project viewer (3.4.1.264215), which includes a revised mesh uploader floater with deformation options for the male and female shapes.

According to Nalates Urriah, the new options invalidate all test items provided for the project so far, and new samples are now required. No comments to this effect appear to have been made on the JIRA or elsewhere, so they may have been confined to a user group meeting. Details on how to provide test items can be found in Oz’s forum post on the matter. The JIRA (STORM-1716) for this project is still open for viewing and comment.

Group Services Project Viewer

As noted this week, there is now a Group Services (group management) project viewer available for testing the new HTTP group management service. The server side of this project has yet to be rolled out to Aditi, so the viewer cannot be tested yet. However, Baker Linden, who is developing the service, is apparently updating the JIRA SVC-4968 (which is still publicly viewable) with the project status, and has indicated he’ll post when the server-side elements are available for testing.

The viewer is available in Windows, Linux and OSX flavours.

HTTP Libraries Viewer

The HTTP Libraries project viewer (3.3.3.262585) appeared on July 27th. This project, which Monty Linden is driving, is currently aimed at improving texture downloading and rezzing as part of the Shining project.

Texture loading / rezzing appears to be significantly faster on this viewer than on other offerings, although some of the reported effects may be placebo. Some people have reported that floaters and the like seem to load more slowly, while others have reported performance improvements in areas unrelated to the HTTP library changes.

Beta and Release Viewers

The Beta viewer (3.4.0.264445 at the time of writing) continues to be focused on pathfinding, with fixes and updates going into it on a weekly basis, which is why the pathfinding tools have yet to appear in a release version of the viewer. The removal of JIRA numbers from the release notes makes tracking previously watched issues that much harder (even if the JIRAs themselves are no longer accessible, keeping the JIRA numbers visible makes it easier to identify specific issues being tracked).

The release viewer (3.3.4.264214), meanwhile, appears to be focused on bug fixes and general improvements, and its release notes currently still include JIRA numbers, which makes scanning for specific fixes easier.

Performance

I carried out basic performance tests on the viewers listed above using Dimrill Dale as my sample sim, at times when the region held the same number of avatars (five, including myself). Tests were carried out in the same location on the region, looking in the same direction and with the same viewer settings (e.g. Graphics on high, Draw Distance set to 260m, default time-of-day, with deferred disabled / with deferred enabled and lighting set to Sun/Moon+projectors, etc.). While all such tests are rough-and-ready, these did tend to show that all of the viewers offer much the same performance on my default PC (see the sidebar panel on the right of this blog’s home page for system details). My results were:

- Non-deferred: 18-20fps

- Deferred with Sun/Moon+projectors: 8-10fps

Similar figures were also obtained using the current Firestorm and Exodus viewers, although with deferred enabled and Sun/Moon+projectors active, Firestorm was slightly down at an average of 17-18fps, while the other viewers averaged closer to 19-20fps.