Tuesday, April 27th saw High Fidelity move to an “open beta” phase, with a simple Twitter announcement. Having spent just over a year in “open alpha” (see my update here), the company and the platform have been making steady progress over the course of the last 12 months, offering increasing levels of sophistication and capabilities – some of which might surprise those who have not actually set foot inside High Fidelity but are nevertheless willing to offer comments concerning it.

I’ve neglected updating on HiFi for a while, my last report having been at the start of March. However, even since then, things have moved on at quite a pace. The website has been completely overhauled and given a modern “tile” look (something which actually seems to be a little bit derivative rather than leading edge – even Buckingham Palace has adopted the approach).

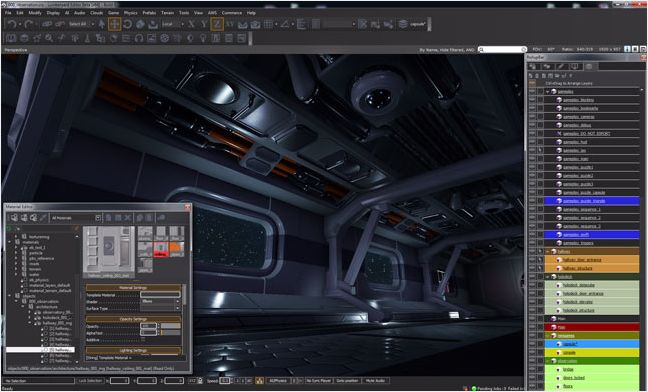

The company has also hired Caitlyn Meeks, former Editor in Chief of the Unity Asset Store, as their Director of Content, and she has been keeping people apprised of progress on the platform at a huge pace, with numerous blog posts. These include technical overviews of new capabilities, as well as coverage of the more social aspects of the platform, and push aside the myth that High Fidelity is rooted in “cartoony” avatars: it in fact has a fairly free-form approach to avatars and to content.

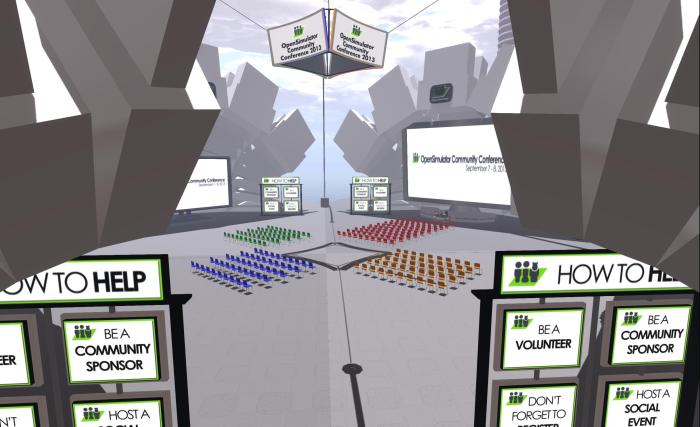

High Fidelity may not be as sophisticated in terms of overall looks and content – or user numbers – as something like Second Life or OpenSim, but it is grabbing a lot of media attention (and investment) thanks to its very strong focus on the emerging range of VR hardware systems, and the beta announcement is timed to coincide with the anticipated increasing availability of the first generation HMDs from Oculus VR and HTC. Indeed, while the platform can be used without HMDs and their associated controllers, High Fidelity describe it as being “better with” such hardware.

I still tend to be of the opinion that, over time, VR perhaps won’t be as disruptive in our lives as the likes of Mixed / Augmented Reality as these gradually mature; as such I remain sceptical that platforms such as High Fidelity and Project Sansar will become as mainstream as their creators believe, rather than simply vying for as much space as they can claim in similar (if larger) niches to that occupied by Second Life.

And even if VR does grow in a manner similar to that predicted by analysts, it still doesn’t necessarily mean that everyone will be leaping into immersive VR environments to conduct major aspects of their social interactions. As such, it will be interesting to see what kind of traction High Fidelity gains over the course of the next 12 months, now that it might be considered to be moving more towards maturity – allowing for things like media coverage, etc., of course.

Which is not to say the capabilities aren’t getting increasingly impressive, as the video below notes – and just look at the way Caitlyn’s avatar interacts with the “camera” of our viewpoint!
